Every system here runs in the real world — with real users, real data, and real consequences when something breaks.

01 Resso.ai

Founding Engineer · Real-Time AI Interview Intelligence

P(hire | audio, transcript, t) = σ(Wₜhₜ + b)

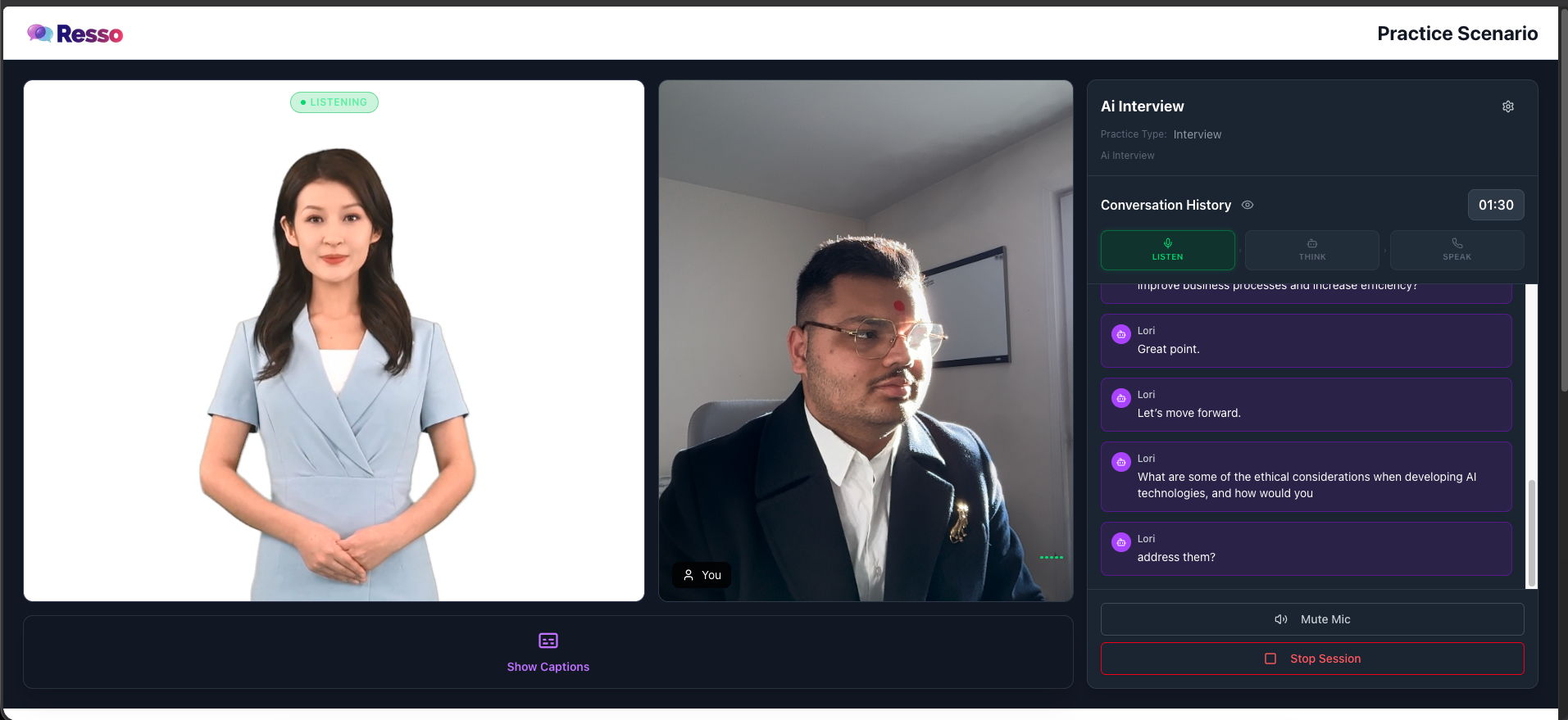

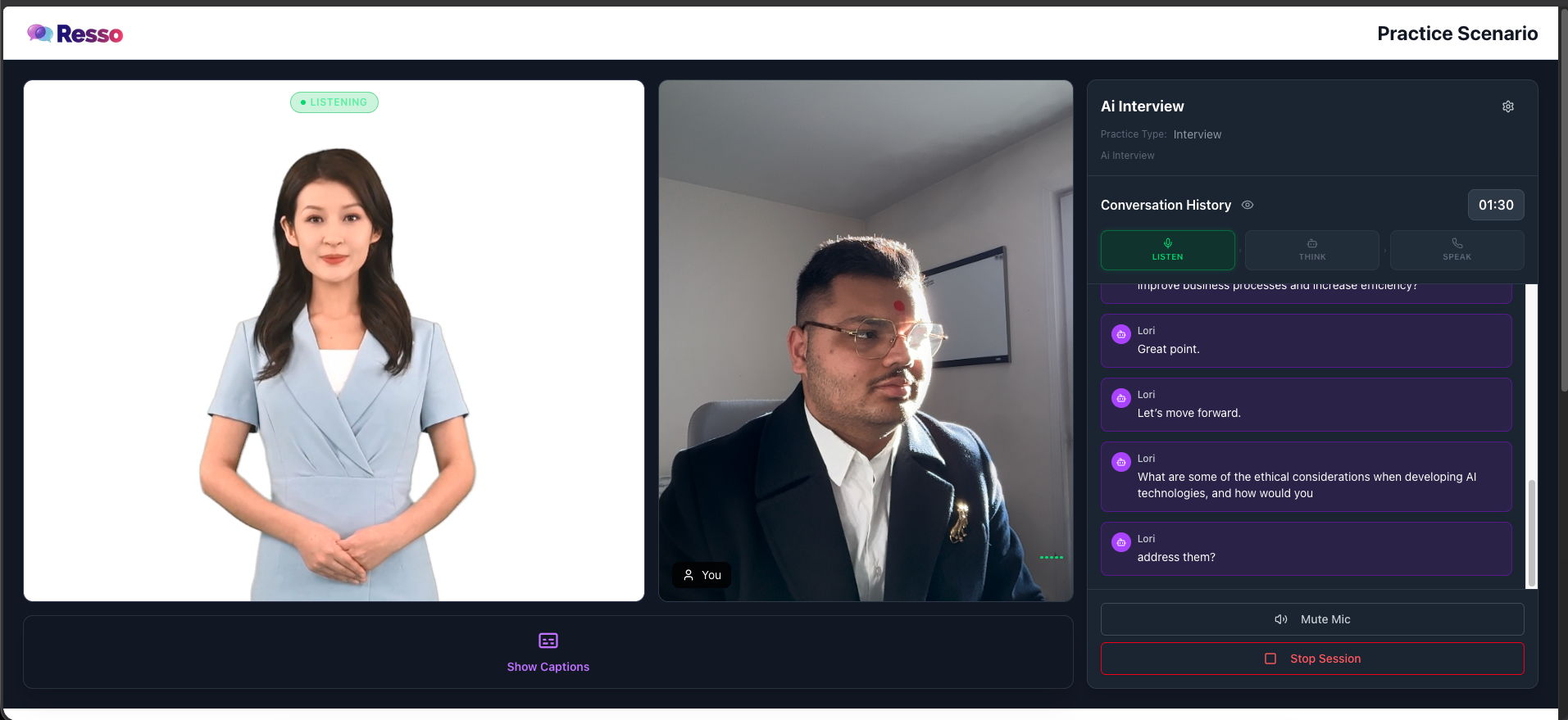

Live Screenshots

Resso.ai: Real-time interview scoring platform · WebRTC · PyTorch · ONNX · AWS

Built the entire production ML platform from scratch. WebRTC audio capture at 8 kHz, speaker diarization pipeline separating candidate and interviewer voices in real time, NLP feature extraction across prosody, semantics and pace, and live hire-probability scoring — all streaming inference at sub-2-second latency during a live interview. The AI rates conversations as they happen, not after.
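The scoring formula above can be read as a per-chunk update: each new slice of audio and transcript features refreshes a hidden state hₜ, and a sigmoid turns a weighted sum of that state into a probability. The sketch below is illustrative only. It uses fixed weights instead of the time-indexed Wₜ, and an exponential moving average stands in for the learned recurrent update. The class name, the `decay` parameter and the feature values are all assumptions, not the production model.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function mapping a logit to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


class StreamingScorer:
    """Keeps a running state h_t and emits sigmoid(w . h_t + b) per chunk."""

    def __init__(self, weights, bias, decay=0.8):
        self.weights = list(weights)
        self.bias = bias
        self.decay = decay  # hypothetical smoothing factor
        self.state = [0.0] * len(self.weights)

    def update(self, features):
        # Moving average as a stand-in for a learned recurrent update.
        self.state = [
            self.decay * h + (1.0 - self.decay) * x
            for h, x in zip(self.state, features)
        ]
        logit = sum(w * h for w, h in zip(self.weights, self.state)) + self.bias
        return sigmoid(logit)


# Made-up per-chunk features (for example prosody, semantics, pace).
scorer = StreamingScorer(weights=[1.5, -0.5, 0.8], bias=-0.2)
for chunk_features in ([0.2, 0.1, 0.5], [0.6, 0.0, 0.7], [0.9, 0.1, 0.8]):
    print(round(scorer.update(chunk_features), 3))
```

Because the state is updated incrementally, each score costs one small vector operation per chunk, which is the property that lets a score be refreshed while the interview is still running.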

Designed the talking AI avatar system: lip-sync audio-to-viseme mapping, WebSocket event bus, and on-device model quantisation for smooth real-time video rendering. The system processes every spoken word into actionable structured signals.

Inference latency: 8s → <2s · Job placement rate +45% · Live during interview

PyTorch · WebRTC · Speaker Diarization · NLP · Docker · AWS · MLOps · ONNX

02 Corol.org & NunaFab

ML Engineer · UHPC Strength Prediction · ML for Structural Engineering

ŷ = Σ wₖfₖ(X) + ε · SHAP: φᵢ = E[f(X)|Xᵢ] − E[f(X)]

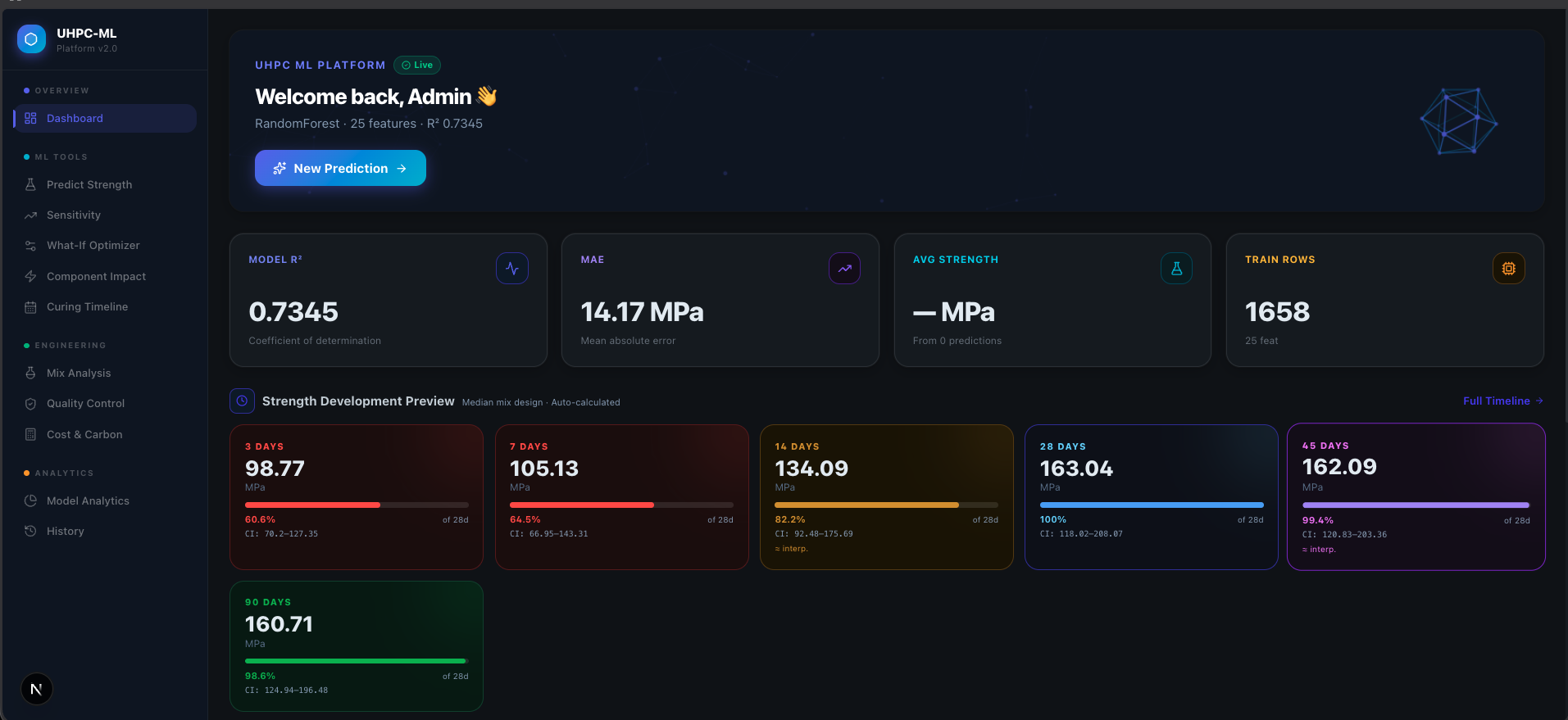

Live Screenshots

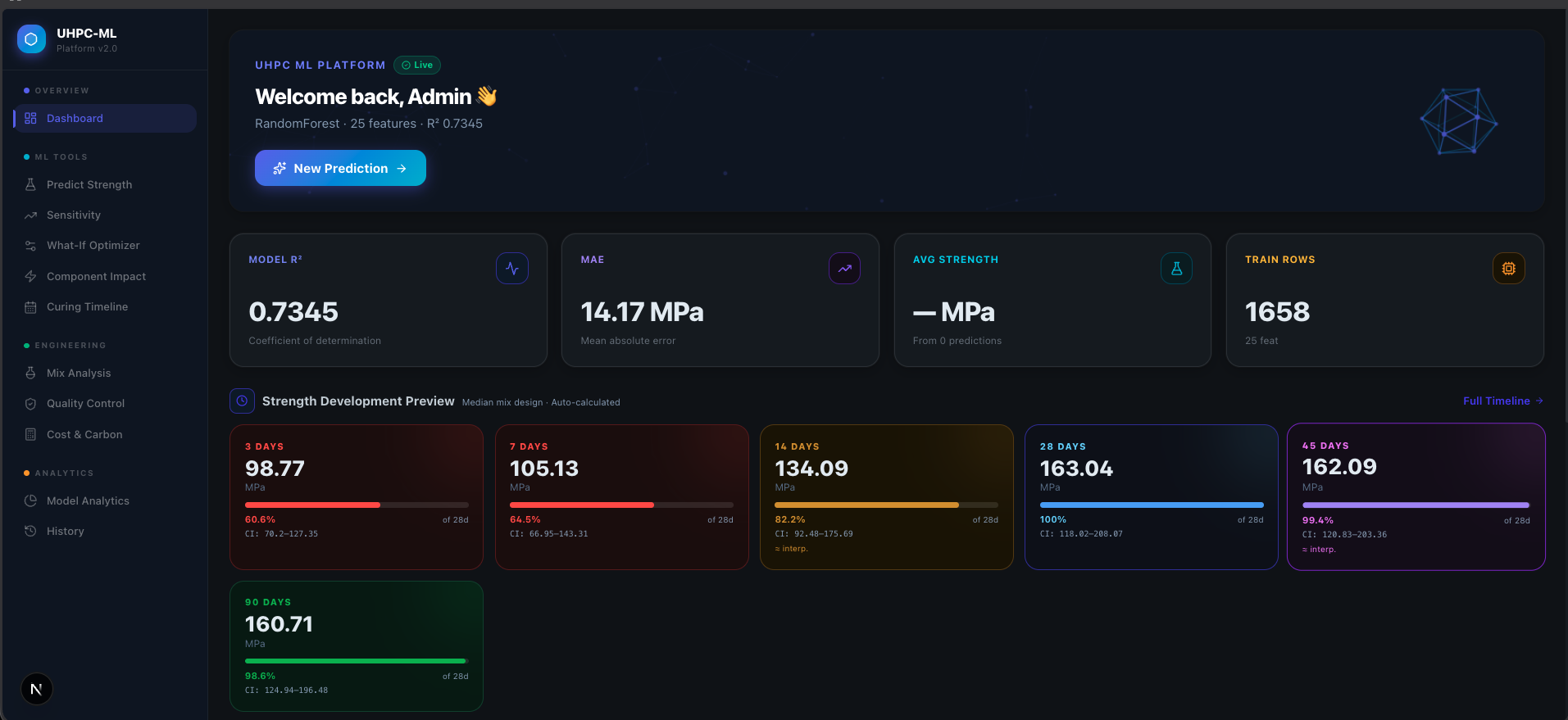

UHPC formulation prediction platform · XGBoost · SHAP attribution · R²=0.89

Ultra-High Performance Concrete (UHPC) meets machine learning. Working with Corol.org and NunaFab, I built compressive strength prediction models for UHPC mix designs that tell structural engineers not just which mix achieves the target strength, but why each constituent (water-cement ratio, silica fume, fibre dosage, curing age) drives the outcome. The dataset was only 200 rows. Transfer learning from related concrete domains, aggressive feature engineering, and a SHAP-explainable gradient-boosting ensemble got R² to 0.89.

SHAP attribution made the model interpretable: engineers saw exact feature impact values (φᵢ) for each mix ingredient — silica fume contribution, fibre reinforcement effect, W/C ratio influence. Reduced physical lab testing cycles from weeks to a single afternoon. Screened hundreds of UHPC formulations computationally before any concrete was poured.
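To make the φᵢ values concrete, here is a minimal sketch of exact Shapley attribution by brute-force enumeration over feature coalitions. It is not the project's pipeline: tree ensembles are normally explained with a tree-specific algorithm rather than enumeration, and the `toy_strength` function with its coefficients is invented purely for illustration. Features outside a coalition are set to a baseline mix, which is one common convention and an assumption here.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values of predict at x, relative to a baseline input."""
    n = len(x)

    def value(coalition):
        # Features in the coalition take their real value, others the baseline.
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis


def toy_strength(mix):
    """Hypothetical strength model (MPa); coefficients are made up."""
    wc_ratio, silica_fume, fibre = mix
    return (200.0 - 300.0 * wc_ratio + 1.5 * silica_fume
            + 8.0 * fibre + 0.2 * silica_fume * fibre)


mix = [0.2, 20.0, 2.0]        # W/C ratio, silica fume %, fibre %
reference = [0.3, 10.0, 0.0]  # baseline mix to compare against
for name, phi in zip(["W/C ratio", "silica fume", "fibre"],
                     shapley_values(toy_strength, mix, reference)):
    print(f"{name}: {phi:+.2f}")
```

The attributions always sum to the difference between the prediction for the mix and the prediction for the baseline, so an engineer can read each φᵢ as that ingredient's share of the predicted strength change.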

Lab cycles: weeks → one afternoon · 100s of mixes screened computationally · R² = 0.89 · SHAP-explainable

SHAP · XGBoost · Scikit-learn · Transfer Learning · Ensemble · Feature Engineering

01 Lawline.tech

AI Engineer · Legal AI Platform for Rogers · Live SaaS · $1M Investment Conversation

E(doc) = LLM(top-k(HNSW(chunk)) + clause_template) → {party, obligation, risk, date}

Live Screenshots

Lawline.tech: Live SaaS product · Fine-tuned LLM · HNSW · FastAPI

Built the AI core for Lawline.tech — a Canadian legal research platform serving attorneys who cannot use any cloud AI due to attorney-client privilege. Built a fully local RAG stack: HNSW vector store over Canadian legal corpora, BGE cross-encoder reranker, GGUF-quantized local LLM — zero data ever leaves the office. Sub-4% hallucination rate on legal eval sets. Now in an active $1M investment conversation with the President of Rogers for enterprise licensing across Rogers' legal and compliance teams.

Designed the full pipeline: PDF ingestion → semantic chunking (512-token, 128 overlap) → local embeddings → HNSW index → top-K reranked retrieval → GGUF LLM response. Built confidence-gated output: low-confidence answers route to human review instead of surfacing to attorneys. The architecture became the sales pitch — attorneys demo'd the zero-outbound-packets screen to their law society contacts.
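Two steps of this pipeline are easy to show in isolation: overlapping chunking and the confidence gate. The sketch below assumes the text is already tokenized (a plain list of integers stands in for real tokenizer output) and uses the 512/128 settings quoted above. The `route_answer` helper and its `threshold` of 0.7 are hypothetical, since the source does not state how confidence is computed or where the cut-off sits.

```python
def chunk_tokens(tokens, size=512, overlap=128):
    """Split a token list into windows of `size` sharing `overlap` tokens."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # the last window already reaches the end of the document
    return chunks


def route_answer(answer, confidence, threshold=0.7):
    """Send low-confidence answers to human review (threshold is assumed)."""
    route = "attorney" if confidence >= threshold else "human_review"
    return {"route": route, "answer": answer}


tokens = list(range(1000))  # stand-in for real token ids
chunks = chunk_tokens(tokens)
print([len(c) for c in chunks])
print(route_answer("Clause 4 limits liability.", confidence=0.55)["route"])
```

The overlap means a clause that straddles a chunk boundary still appears whole in at least one chunk, at the cost of indexing some tokens twice.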

Sub-4% hallucination · Air-gapped · 0 bytes leave the office · $1M Rogers President conversation

Legal AI · Fine-tuning · Document Parsing · HNSW · FastAPI · TypeScript · ONNX

02 MCP Integration Server

Builder · Universal Agentic Tool Layer

Agent(t) → [LLM + context] → MCP → {toolₙ} → structured_result → LLM

Every enterprise tool lives in a silo — Slack, CRMs, databases, internal APIs. AI agents need one standard to speak to all of them. I built a production MCP (Model Context Protocol) server that acts as the universal backbone. Write one integration, reach everything. The agent calls MCP; MCP speaks to the world. This is how agentic behaviour is triggered in real-world systems: LLM receives context → determines tool needed → MCP routes call → returns structured result → LLM continues reasoning.

Built multi-tool orchestration: parallel tool calls, retry logic, structured output parsing, and context memory integration so agents remember previous tool results across a session.
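The orchestration pattern (parallel calls, retries, structured results) can be sketched with plain `asyncio`. This is not the server's code and it does not use any MCP SDK: the tools are ordinary coroutines, and the names `call_with_retry`, `run_tools` and the demo tools are hypothetical. It is written in Python for consistency with the other examples here, although the project's listed stack is TypeScript.

```python
import asyncio


async def call_with_retry(tool, args, retries=3, base_delay=0.01):
    """Call one tool, retrying with exponential backoff on any exception."""
    for attempt in range(1, retries + 1):
        try:
            return {"ok": True, "result": await tool(**args)}
        except Exception as exc:
            if attempt == retries:
                return {"ok": False, "error": str(exc)}
            await asyncio.sleep(base_delay * 2 ** (attempt - 1))


async def run_tools(calls):
    """Run (name, coroutine_function, kwargs) triples concurrently."""
    results = await asyncio.gather(
        *(call_with_retry(fn, kwargs) for _, fn, kwargs in calls)
    )
    return {name: res for (name, _, _), res in zip(calls, results)}


# Hypothetical demo tools standing in for real integrations.
async def lookup_customer(customer_id):
    return {"id": customer_id, "tier": "gold"}


async def unreachable_service():
    raise ConnectionError("service unavailable")


print(asyncio.run(run_tools([
    ("crm", lookup_customer, {"customer_id": 7}),
    ("billing", unreachable_service, {}),
])))
```

Returning a uniform `{"ok": ..., ...}` record for every tool, including failures, keeps one broken integration from aborting the whole batch and gives the LLM a structured result it can reason over.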

One server · N integrations · Agentic pipelines with persistent context memory

MCP Protocol · TypeScript · Node.js · Tool Use · Agentic AI · Context Memory

03 Vadtal: Vector DB Platform

AI Architect · On-Premise RAG · Private AI · Zero Data Egress

R(q) = top-k(cosine(E(q), Eᵢ)) → LLM(prompt + context)

A religious organisation managing thousands of donors needed AI search but couldn't send a single byte to the cloud. I designed and built a complete on-premise RAG stack for Vadtal: quantized LLM running on 16 GB RAM using GGUF format, a custom HNSW vector store with semantic chunking and metadata filtering, cosine similarity scoring, and a FastAPI inference server. Everything — embedding, retrieval, generation — runs locally.

Built the full RAG pipeline: document ingestion → semantic chunking → embedding (local model) → HNSW index → top-k retrieval with cosine similarity → context injection → LLM response. Sub-1s end-to-end query latency, 100% private, works offline.
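The retrieval step R(q) can be illustrated with a brute-force scan. The sketch below scores every stored vector against the query with cosine similarity and keeps the top k. An HNSW index approximates the same ranking without visiting every vector. The tiny two-dimensional vectors, the document ids and the `build_prompt` template are assumptions for illustration, not the deployed system.

```python
import heapq
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec, index, k=3):
    """Return the k best (score, doc_id) pairs, highest score first."""
    scored = ((cosine(query_vec, vec), doc_id) for doc_id, vec in index)
    return heapq.nlargest(k, scored)


def build_prompt(question, passages):
    """Hypothetical prompt template: retrieved context, then the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Context:\n{context}\n\nQuestion: {question}"


# Toy index: (doc_id, embedding) pairs with made-up 2-d embeddings.
index = [("donor-policy", [1.0, 0.0]),
         ("event-schedule", [0.0, 1.0]),
         ("donation-faq", [1.0, 1.0])]
texts = {"donor-policy": "Donor records are kept on site.",
         "event-schedule": "Events run every Sunday.",
         "donation-faq": "Donations can be made in person."}

hits = top_k([1.0, 0.0], index, k=2)
print(build_prompt("Where are donor records stored?",
                   [texts[doc_id] for _, doc_id in hits]))
```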

Sub-1s queries · 100% private · Fully offline · 16 GB RAM footprint

Local LLM · GGUF · HNSW · Vector DB · ONNX · FastAPI · Semantic Chunking

04 Lost and Found

Full-Stack Engineer · TTC · Transit Capstone · Pitching to TTC Director, May 2026

match(claim, item) = cosine(E(desc_claim), E(desc_item)) > θ → notify_owner

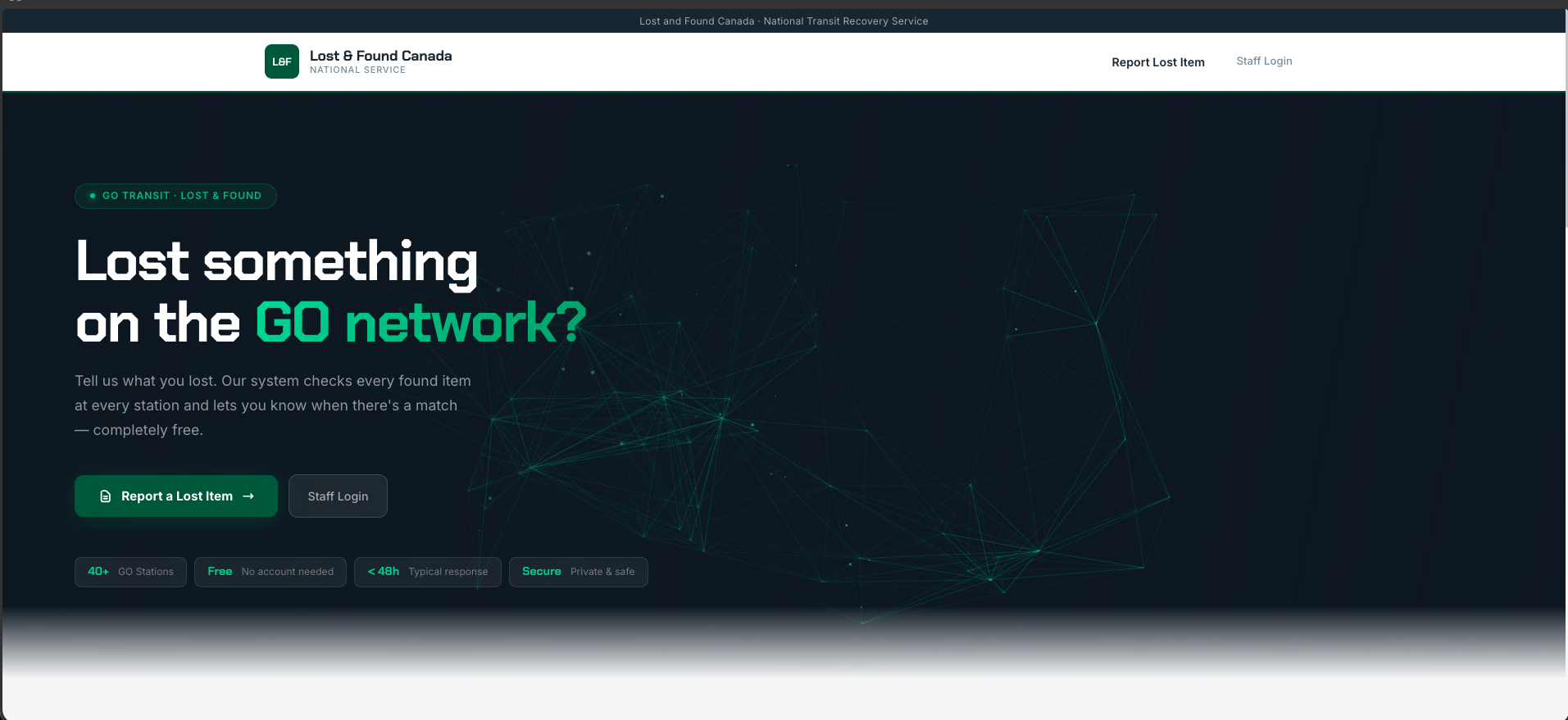

Live Screenshots

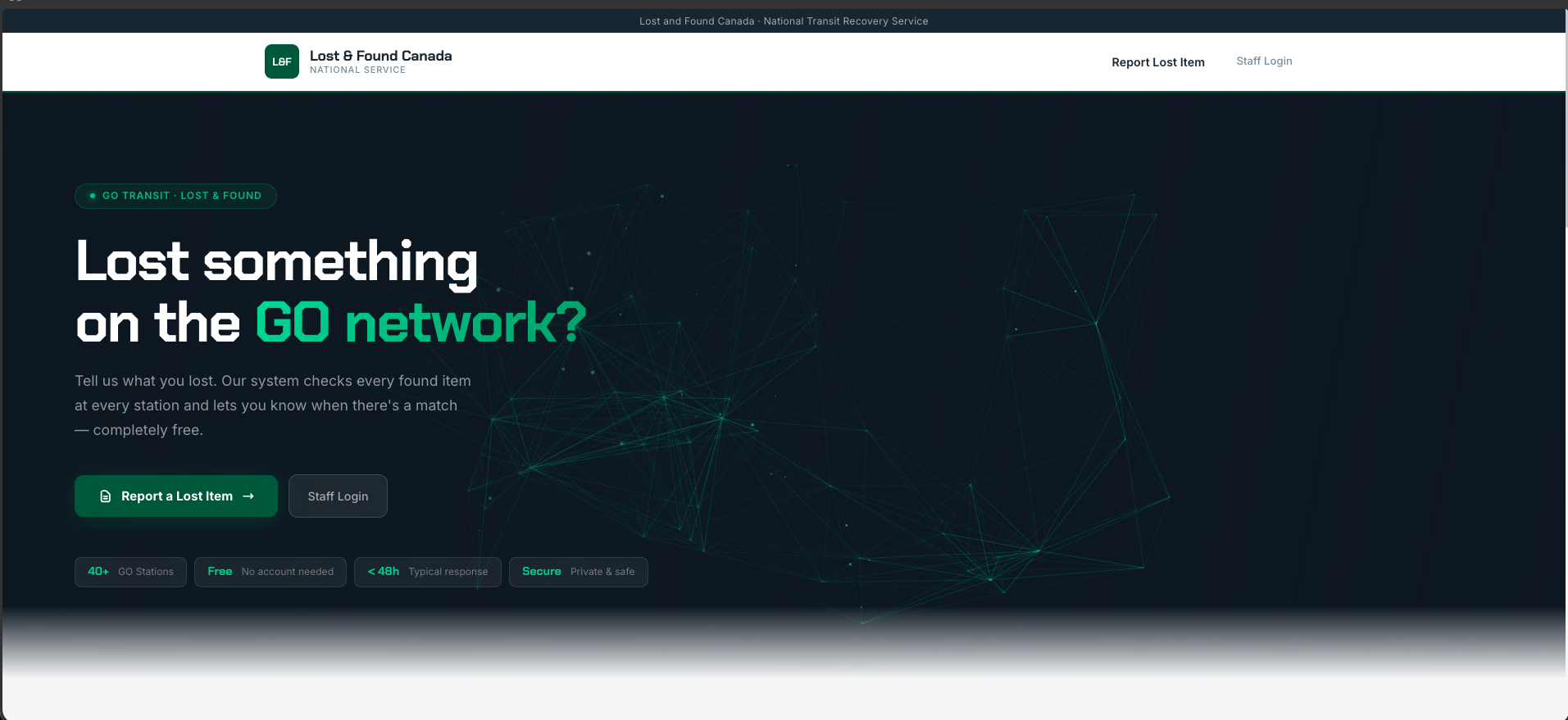

TTC Lost & Found: System overview · Full-stack · AI similarity matching engine

Built a complete digital Lost & Found system for the Toronto Transit Commission — one of North America's largest transit networks serving 1.7M daily riders. The platform digitizes the entire claim lifecycle: TTC staff report found items via a mobile app, each item gets a unique QR-tagged scan record, and owners submit claims through a mobile-first portal. An AI similarity-matching engine connects found items to incoming claims using description embeddings. Pitching this to the TTC Director in May 2026.

Full pipeline: mobile item reporting → QR generation → owner claim portal → AI description similarity matching → staff approval dashboard. Built for TTC's operational constraints — works on spotty transit WiFi, handles hundreds of daily items, fully auditable claim history. Production-ready architecture, not a school demo.
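The matching rule match(claim, item) reduces to a thresholded similarity between two description embeddings. The sketch below swaps in a bag-of-words word count for the learned embedding E(·) so that it runs without a model, which means it matches only on shared words rather than meaning. The item ids, descriptions and the threshold value `theta` are made up for illustration.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for a learned embedding: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_matches(claim_description, items, theta=0.5):
    """Return ids of found items scoring above theta, best match first."""
    claim_vec = embed(claim_description)
    scored = [(cosine(claim_vec, embed(desc)), item_id)
              for item_id, desc in items.items()]
    return [item_id for score, item_id in sorted(scored, reverse=True)
            if score > theta]


found_items = {
    "A1": "black leather wallet with cards",
    "B2": "red umbrella",
    "C3": "small black wallet",
}
print(find_matches("lost black leather wallet", found_items))
```

Raising `theta` trades recall for precision: fewer candidate items reach the staff approval dashboard, but a genuine match described in different words is more likely to be missed.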

Full claim lifecycle · AI item matching · 1.7M riders · Mobile-first · Pitching to TTC Director May 2026

Next.js · TypeScript · AI Matching · QR Code · Mobile-First · PostgreSQL · FastAPI